When it comes to the future of AI development, there’s one question that every ML team faces early on that has a reverberating effect on the functionality of their models. Whether they build an infrastructure that prioritizes coding to build a capable model or focus on perfecting the data.

For the most part, the answer to that question is slanted to favor the more practical and familiar option for ML practitioners, to put effort into optimizing model architectures; not the type or quality of data that will be fed into them. It’s easy to see why the model-centric approach is the preferred method in the industry. It’s an old but true trope that everyone wants to do the glamorous model work, but no one wants to do the hard and often unappreciated data work.

After all, why wouldn’t it be? It’s the approach that has received the most investment and research, with those factors going a long way in gaining trust and assurance from the ML community, who have come to view it as the most promising path forward to successful deployment. Although it’s also widely acknowledged, even more so today than ever, that the typical way of building and enhancing AI models isn’t necessarily the most efficient.

In this article, we’ll test the question of best practices for developing AI applications, emphasizing computer vision processes by breaking down conventional methods and considering how data management plays a vital role in achieving a more efficient and equitable pipeline.

From Big Data to Better Data

Just because a process has normalized doesn’t mean it leads to the best final iteration or solution, and it’s no different when it comes to the debate on data versus model prioritization. Since it’s been found that focusing solely on model training and fine-tuning leads to delaying resolutions rather than eliminating issues.

Instead of scrambling to address shortcomings in flawed data later on down the pipeline, it makes for a more resilient ML workflow to identify and resolve those concerns from a strategic standpoint early on. Until more recently, the general opinion has been that bigger datasets lead to a better product to introduce to the market and integrate into applications.

The Price of Skewed Data

With the rate and frequency of experimentation dedicated to advancing models, there’s a more current understanding that bigger data doesn’t always mean better – better data, in terms of quality and accuracy, is what really leads to the ultimate end goal of higher performance in ML projects.

In a broad sense, data scientists and ML engineers have learned that the common approach of gathering as much data as quickly as possible may initially seem to pay off, but it comes with an inevitable price. A seemingly endless list of discrepancies and dysfunctional outcomes with flawed training data as the obvious culprit.

For that reason, it’s necessary for practitioners to view data curation and optimization as a critical pre-training step, not a one-off process phase that satisfies the basic requirement of data collection and processing.

The Shift to Data-Centric

As the saying goes, old habits die hard. Still, in regards to the ML industry’s tendency to rely on expanding and optimizing architectures over the other vital component of AI system development; the use of data, it’s unnecessary to drop it altogether. The problem with this perspective is that it’s impractical and dependent on various parameters from one project to another.

Model development isn’t a one-size-fits-all endeavor. Though, one might assume that to be the case with how the AI community has treated priorities in the development process. As well as the market sectors, it has been able to impact the most through natural progression and adoption of AI technology.

Meeting Nuanced Market Needs

A few to name that stand to benefit from the standard model-centric approach are agriculture, manufacturing, security, and the automotive industries. While others may require more specialized considerations like healthcare or education.

This shortcoming shouldn’t be disregarded if ML teams want to be equipped for the needs of a growing field with a constantly expanding reach and breaching new markets that need a tailored touch. There are clear indications that the AI industry is slowly shifting to be more data-oriented, and those signs demonstrate that machine learning frameworks have reached a new level of sophistication on a broad scale.

With more complex architectures, data is suddenly at the forefront of minds and gaining well-earned respect. It’s up to companies, large and small, to pave the way to a data-centric way of thinking that rightfully honors its potential in enabling a new level of rapid growth and capability for modern CV models.

A Well-Balanced Approach

Different problems require different solutions, which also apply to data and modeling; as two separate approaches that can be deployed in tandem when constructing the framework for any ML project. Essentially, one method cannot be utilized to its full potential without the other.

A workflow that puts more importance and emphasis on improving models by expanding parameters and multiple testing iterations is an incomplete process. Only through the inclusion of thoroughly labeled and reviewed data can a model truly rise to meet the challenge of its purpose and implementation.

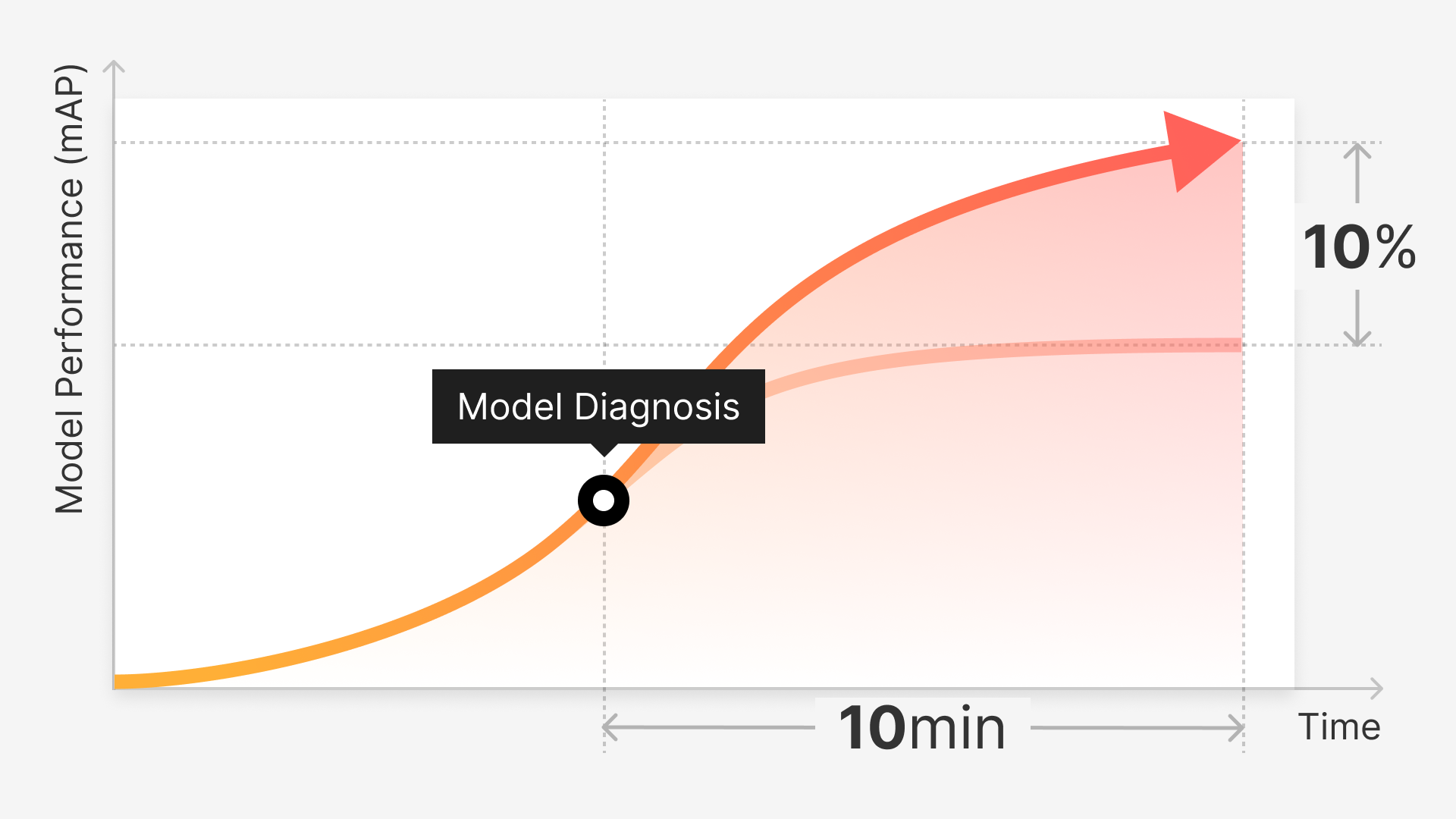

The act of dataset curation and processing can be simplified to ease the initial planning phases of model development. There now exist tools and platforms that explicitly address the pain points of annotation, eliminating noise and enhancing data overall to suit the varying requirements of a project. Among them, Superb AI’s data training platform, offers the leisure of custom automated data labeling at scale.

The Next Stage of AI

When ML teams settle for inaccurate or low-quality data resulting from inefficient data processing practices, they’re settling for a subpar model, leaving much more to desire. The ML industry is entering a new period of progression, and it’s clear that a model-centric approach isn’t always enough to scale architectures. At least, not for any practitioners that intend to step up to the next stage of AI development.

Gone are the days of haphazard handling of datasets, with the dominant belief that if a model is built well enough, dealing with data noise is a secondary concern. Dealing with problematic data pre-training is directly linked to a model’s accuracy and performance upon deployment, to the point that a project may never reach production because of quality and performance concerns.

A simple and obvious answer to preventing those concerns has always been available but understated in importance: pairing quality modeling development with higher-quality data to increase your chances of a successful AI initiative.